Banks are increasingly relying on

Even before the details of Facebook’s Cambridge Analytica scandal became public, there were many questions about banks' use of data from external, unstructured sources.

While banks have long relied on proprietary data to make business decisions, not all are investing properly in the capabilities to verify these new sources of data, said Alan McIntyre, senior managing director and head of Accenture’s banking practice.

“Inaccurate, unverified data will make banks vulnerable to false business insights that drive bad decisions,” said McIntyre. “Banks can address this vulnerability by verifying the history of data from its origin onward — understanding the context of the data and how it is being used — and by securing and maintaining the data.”

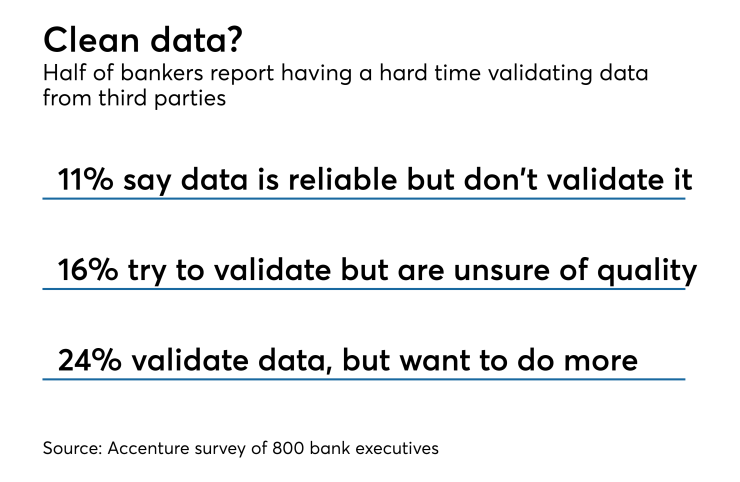

According to the firm’s Banking Tech Vision 2018 report, released last week, 28% of the 800 bankers surveyed said that "most of the time" they do not validate or examine the data they receive from outside sources, while 24% validate the data but recognize they should do a lot more to ensure quality.

The issue is distinct from cybersecurity, where banks have a responsibility to keep bad actors from accessing customer data, McIntyre said. Rather, it is about ensuring that the explosion of external data used in areas like marketing accurately reflects customer sentiments and preferences, he said.

McIntyre used Yelp as an example: Do bad reviews of a restaurant “truly reflect the sentiments of consumers, or is it being manipulated by competitors?”

The challenge reflects how banks are starting to face some of the same issues tripping up social media companies that aggregate

Just as banks have dedicated know-your-customer protocols, McIntyre says, they "will also need know-your-data processes ... to show the provenance of this data to regulators."

McIntyre says data-focused regulation like the

Banks should be asking more from their data vendors, said Roy Kirby, senior product manager for SIX, a Zurich-based financial data provider that is owned by a conglomerate of banks.

“Traditionally, banks have taken on too much of the burden for regulatory response: from sourcing raw data, building business rules and logic, and applying data management infrastructure and resources, all the way through to integrating existing procedures with new reporting tools and compliance mandates,” Kirby said.

“In today’s world, this way of doing things simply doesn’t scale. Inefficient procedures can have a significant negative impact on regulatory response and can hamper banks’ ability to continue with existing business models.”

Many financial institutions are more concerned with how quickly they can get data, which sometimes means data quality gets overlooked, said Barry Star, CEO and founder of Wall Street Horizon, a provider of corporate data.

“Bad data at the speed of light is still bad data,” Star said by email. “The key to high data quality is not a secret — it’s about paying attention to detail, understanding how to mine data as close to the primary sources as possible, and understanding that data and information are not the same.”