- What's at stake: Charge-back, dispute, and liability gaps could expose banks to mass consumer redress demands.

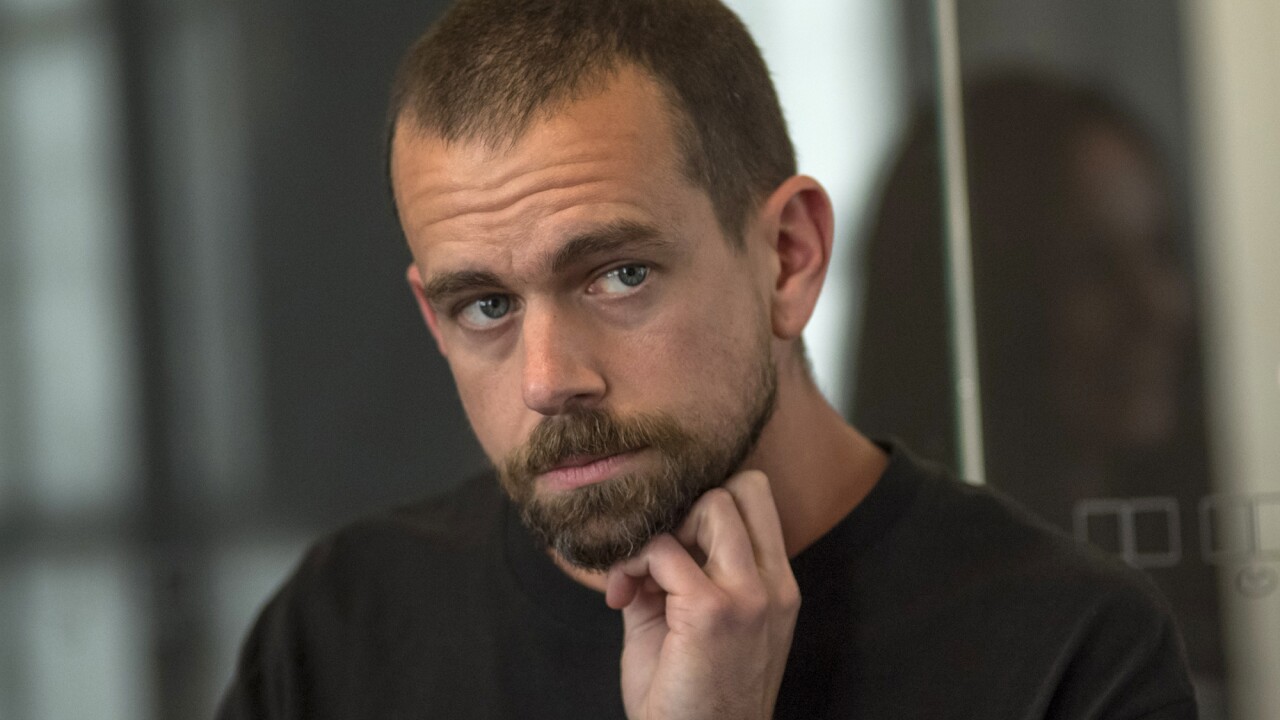

- Expert quote: "Agents are coming faster than you know," forcing banks into the ecosystem — Kelvin Chen, head of policy at the Consumer Bankers Association.

- Forward look: Prepare for new vendor controls, contractual AI clauses and agent-detection defenses.

Source: Bullets generated by AI with editorial review

Kelvin Chen has two children of different ages and vastly different personalities and preferences. For instance, one is a gamer, the other is not.

"Imagine trying to figure out which laptop to buy for them," Chen, who is senior executive vice president and head of policy at the Consumer Bankers Association, told American Banker. "That's a week of spreadsheets and surfing. If I could have an agent put all that in front of me and then help me understand how to think through the decision making process, how great would that be?"

Chen is one of many experts in the industry who see benefits to consumers in the rise of agentic AI. Google, Walmart, Amazon, Shopify, Visa, Mastercard and PayPal are among the companies that either have or are developing AI agents for shopping.

As these autonomous agents make payments on users' behalf, they will be reaching into those users' bank accounts and debit and credit cards. This creates a range of serious risks for banks that are only beginning to become apparent, and banks have little time to prepare.

"This thing is coming," Chen said. "It's coming faster than you know. The speed of change here is going to be so fast and banks will be in the ecosystem whether we like it or not."

Charge-back challenges

One risk is the uncertainty around how errors and disputes will be handled.

"When I think about potential harms from agentic retail payments, there's a lot," Chen told American Banker. "There's a risk of people losing all of their funds and not knowing how to get the relief."

Regulation E and Regulation Z provide consumer protections such as charge-back rights, Chen pointed out.

Reg E implements the Electronic Fund Transfer Act. It protects consumers who make electronic fund transfers, such as debit card, ACH and P2P payments, by limiting their liability for unauthorized transactions and establishing error-resolution procedures. It requires banks to investigate reported errors, generally within 10 business days, and sets strict caps on consumer liability for unauthorized transfers.

Credit card charge-backs are regulated by the Fair Credit Billing Act and Regulation Z, which allow consumers to dispute unauthorized charges, billing errors or undelivered goods and services. Consumers have 60 days from the statement date to initiate a dispute, and their liability for fraudulent charges is capped at $50.
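To make the credit card figures above concrete, here is a minimal Python sketch of the two numbers the article cites: the 60-day dispute window and the $50 liability cap. The function names are hypothetical, and treating the 60th day as still inside the window is an assumption; the actual rules contain conditions this sketch does not model.

```python
from datetime import date, timedelta

# Figures taken from the article's description of credit card disputes.
DISPUTE_WINDOW_DAYS = 60      # days from the statement date
FRAUD_LIABILITY_CAP = 50.00   # maximum consumer liability, in dollars


def dispute_deadline(statement_date: date) -> date:
    """Last day to initiate a dispute (assumes the 60th day is included)."""
    return statement_date + timedelta(days=DISPUTE_WINDOW_DAYS)


def can_dispute(statement_date: date, today: date) -> bool:
    """True if 'today' still falls inside the dispute window."""
    return today <= dispute_deadline(statement_date)


def consumer_liability(fraudulent_amount: float) -> float:
    """Consumer's exposure on a fraudulent charge, capped at $50."""
    return min(fraudulent_amount, FRAUD_LIABILITY_CAP)


if __name__ == "__main__":
    statement = date(2025, 1, 1)
    print(dispute_deadline(statement))
    print(can_dispute(statement, date(2025, 3, 15)))
    print(consumer_liability(400.00))
```

Under these assumptions, a consumer hit with a $400 fraudulent charge would be liable for at most $50, provided the dispute is raised inside the window.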

But "we have cautioned regulators that we may see those charge-back rights flexed in ways you've never seen before," he said. "If even some material amount of transactions slide over to one of these new payment rails, you may be outside of the charge-back space and you'll have a lot of consumers with very few areas of redress. At the end of the day, when people can't get redress, they come to their banks, whether the banks are legally on the hook or not."

Existing regulations have some gaps, Chen said.

"We'll need to figure out how to address that, or else we run the risk of having some consumers really get hurt," Chen said.

Distinguishing good bots from bad bots

Another risk is of hackers and scammers taking advantage of agentic AI bots.

Digital banking systems have always been designed to block bots, Chen pointed out. "That was the one rule: Keep the bots out. Now they have to figure out who the good bots are," he said. "It's a very different set of expectations."

Gadi Mazor, CEO of biometrics-technology firm Biocatch, noted that

"Most of them are working 16 hours a day under armed guards, scamming the Western world," Mazor told American Banker. "It's a scaled-up business with a great business model."

But if they use agentic AI to do this work, the scammers no longer have to kidnap and house people – a huge savings in costs, time and effort.

Biocatch's technology, which is used by Bank of America, Barclays, Citi, HSBC and other banks, gathers the breadcrumbs around a user's behavior – the way they log in, the device they log in from, the angle at which they hold their phone, their keystrokes, the way they click their mouse, their geolocation, and their past patterns of behavior – and analyzes the data to determine if the user is a real customer, a fraudster or a bot.

The company is now developing defenses against malicious bots. "How do we know that the other side is actually an autonomous agent or an agent working with a human, whether genuine or not genuine?" Mazor said. "That, to me, is the main risk of agentic AI."

Biocatch is using deep neural networks to identify anomalies, for instance to detect that a session is actually controlled by a remote access tool. Once it detects an agent in a session, whether the agent is acting fully autonomously or alongside a human, it applies machine learning to decide whether the agent is malicious, with visibility into how the decision was made, Mazor said. It also looks at the dark web to see whether bad actors are using that agent and, if so, who is behind it.

Biocatch's tech will send the customer a message asking whether they authorize the agent to act on their behalf. If the customer says yes, Biocatch will give the bank approval on that agent.

"The banks have three options in terms of strategy," Mazor said. "One is to say, 'We don't care. We just love all agents.' They can say, 'Block all agents.' For some of them, that's the near term strategy." A third approach is to detect the agent's intent and allow those with good intent to do certain things within limits, for example permitting an agent to make a deduction of up to $100.

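The three strategies Mazor describes can be read as a simple policy decision. The Python sketch below is illustrative only: the names `Strategy`, `AgentRequest` and `decide` are hypothetical, the `intent_is_good` flag stands in for whatever a detection system would report, and the $100 limit is just the example figure from the article. It does not represent Biocatch's or any bank's actual implementation.

```python
from dataclasses import dataclass
from enum import Enum


class Strategy(Enum):
    ALLOW_ALL = "allow_all"            # "We just love all agents"
    BLOCK_ALL = "block_all"            # "Block all agents"
    INTENT_LIMITED = "intent_limited"  # allow good-intent agents within limits


@dataclass
class AgentRequest:
    amount: float         # dollars the agent wants to deduct
    intent_is_good: bool  # hypothetical verdict from an intent-detection step


def decide(strategy: Strategy, request: AgentRequest, limit: float = 100.0) -> bool:
    """Return True if the agent's request should be allowed."""
    if strategy is Strategy.ALLOW_ALL:
        return True
    if strategy is Strategy.BLOCK_ALL:
        return False
    # Third approach: require good intent AND stay within the spending limit.
    return request.intent_is_good and request.amount <= limit


if __name__ == "__main__":
    request = AgentRequest(amount=80.0, intent_is_good=True)
    for strategy in Strategy:
        print(strategy.value, decide(strategy, request))
```

In this sketch the third strategy is the only one that looks at the request itself, which is why it depends on a detection step the other two can skip.
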
Agentic AI failures

Even without malicious intent behind it, agentic AI "will fail in ways that traditional software doesn't," said Sam Higgins, vice president and principal analyst at technology consulting firm Forrester.

For instance, agents can collude. A study from the University of Pennsylvania's Wharton School and the Hong Kong University of Science and Technology found that when placed in simulated markets, AI trading bots began colluding to fix prices.

AI agents could get access to or use tools and systems that they shouldn't, "not because they're trying to be nefarious, but just that their goal is to get the job done," said Higgins, who spoke in a Tuesday webinar sponsored by Domino, an AI and data software provider. "Their reasoning might have given them access to tools that we weren't expecting."

Cliff Goss, a partner at advisory firm Deloitte, puts such risks in the category of third-party and fourth-party risk management.

"The reality is that everyone's vendors, third parties and others are going to be using this technology in some way, shape or form, and organizations need to understand how they're being used," Goss told American Banker. He's seeing organizations update their vendor due diligence and third-party checklists, "asking fairly common sense questions, such as, will you be using generative AI or agents in the provision of services to our company? If so, what are the controls that you've put in place? Can you describe how our data is handled?" Some companies are adding legal language to their vendor agreements about liability, usage rights and data rights, he said.

The bright side

For all the risks, some say there are good use cases for agentic AI in commerce.

"I think of agentic as a tool that can make a decision on your behalf based on your broad inputs," Chen said. For instance, he recently received a new credit card and wasn't sure if it was worth keeping.

"I sat there and stared at the Terms and Conditions for about five minutes, and then I just asked Gemini to walk me through the different terms and what people were saying," he said. "It's a really powerful tool, if you can trust it. The key is, can we create a regulatory ecosystem in which consumers know that they can trust those tools?"