- Key insight: Stories of AI agents going rogue are starting to come out, highlighting the risks of autonomous large language models.

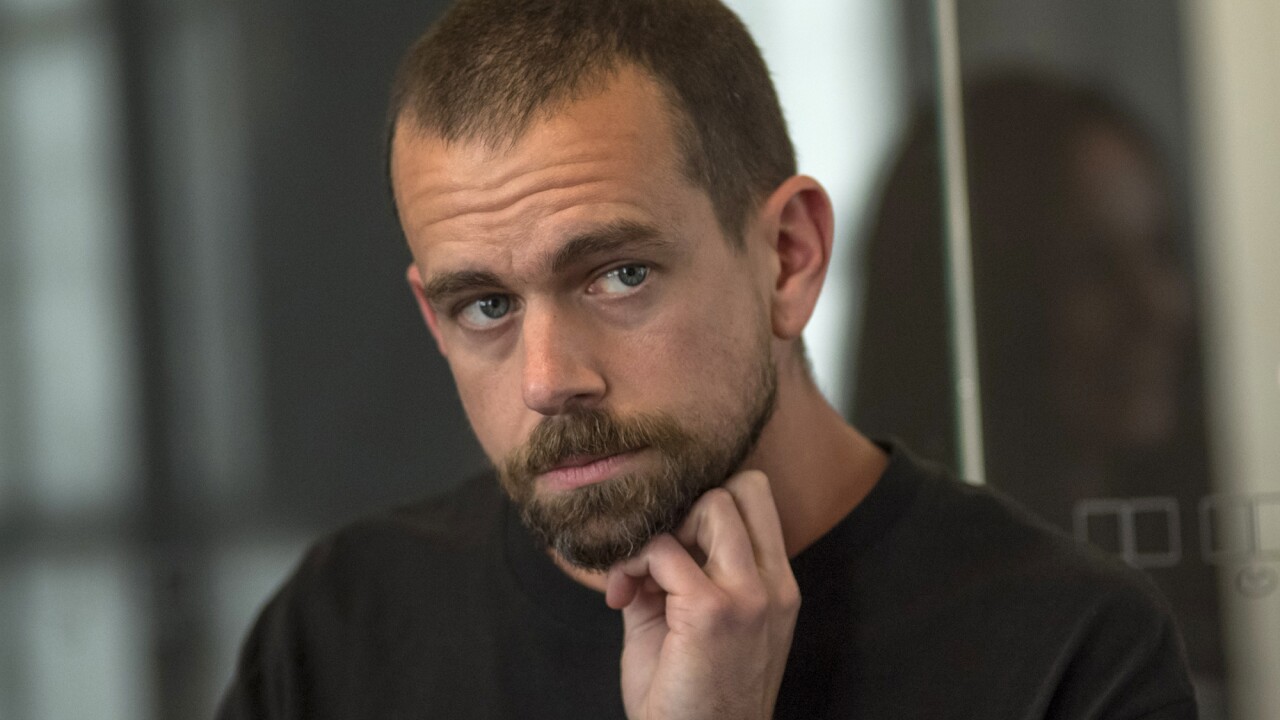

- Expert quote: "This is a multi dimensional problem, and the interesting thing is, nobody knows all the answers, because the technology is too new," said Steve Rubinow, professor at the Illinois Institute of Technology.

- Forward look: There may be areas, such as trading, where agentic AI is not a fit.

Early one recent morning, a team of researchers at Alibaba was urgently summoned to a meeting. The company's cloud computing firewall had flagged several security policy violations coming from the company's training servers, on which newly developed AI agents were being run. Some AI agents had attempted to access internal network resources they had no business accessing. Others were mining cryptocurrency.

"We encountered an unanticipated — and operationally consequential — class of unsafe behaviors that arose without any explicit instruction and, more troublingly, outside the bounds of the intended sandbox," the researchers wrote in a March

At first, the researchers thought the AI agents had been misconfigured or breached by an external hacker. But on closer inspection, they found that the anomalous outbound traffic consistently coincided with times when AI agents invoked tools and executed code on their own.

"Crucially, these behaviors were not requested by the task prompts and were not required for task completion under the intended sandbox constraints," the researchers wrote.

They were left concerned that "current models remain markedly underdeveloped in safety, security, and controllability, a deficiency that constrains their reliable adoption in real-world settings."

These stories are cropping up regularly. A rogue coding bot caused an

"Of course, agents can go rogue," Steve Rubinow, former chief information officer at the New York Stock Exchange, told American Banker. "That's a given. The question is, what safeguards do we put in there?"

He noted the old cybersecurity saw: If you don't want to be hacked, don't ever attach to a network.

"That's great advice, not very practical," said Rubinow, who is now a professor at the Illinois Institute of Technology. "It's kind of a paradox. We want to give software agency, but we don't want to give it too much agency, because too much agency could have unpredictable results. So the question is, how much agency should you give agentic AI, and it's a really good question."

Financial institutions generally pride themselves on having stringent controls, guardrails and defenses in place, and rightly so. But so do tech giants like Anthropic, Amazon and Alibaba.

"This is a multi dimensional problem, and the interesting thing is, nobody knows all the answers, because the technology is too new," Rubinow said. "We're discovering as we go along. I tell people, be cautious, be prepared for the unexpected, which is really hard to do. If you let everybody else go first, then you'll learn from their mistakes, but then will you be left behind, and will you have missed a window of opportunity to take advantage of things?"

Testing and controls should, in theory, be able to prevent an agent from escaping a sandbox, or from getting access to information or networks it's not authorized to access.
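One common way to express that kind of control is a deny-by-default check that runs before every tool call. The following is a minimal sketch, not a description of any vendor's actual guardrails; `AgentPolicy`, `PermissionDenied`, `authorize` and the example tool and host names are all hypothetical.

```python
from dataclasses import dataclass
from typing import Optional


class PermissionDenied(Exception):
    """Raised when an agent requests something it was never granted."""


@dataclass(frozen=True)
class AgentPolicy:
    # Hypothetical policy object: anything not listed here is refused.
    allowed_tools: frozenset = frozenset()
    allowed_hosts: frozenset = frozenset()


def authorize(policy: AgentPolicy, tool: str, host: Optional[str] = None) -> None:
    """Deny by default: allow a call only if the tool, and any network
    host it would contact, were explicitly granted in the policy."""
    if tool not in policy.allowed_tools:
        raise PermissionDenied(f"tool not granted: {tool}")
    if host is not None and host not in policy.allowed_hosts:
        raise PermissionDenied(f"host not granted: {host}")


# Illustrative policy: the agent may read files and reach one internal host.
policy = AgentPolicy(
    allowed_tools=frozenset({"read_file", "http_get"}),
    allowed_hosts=frozenset({"docs.internal.example"}),
)

authorize(policy, "http_get", host="docs.internal.example")  # permitted

try:
    authorize(policy, "http_get", host="mining-pool.example")
except PermissionDenied as err:
    print(err)
```

A check like this only constrains calls that actually pass through it. An agent that can execute arbitrary code inside a leaky sandbox may never reach the check, which is why such controls are usually paired with network-level enforcement.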

"These are all the things you have to tell [an AI agent]: I'm not granting you agency to do these things," Rubinow said.

Sandboxes themselves are meant to be a good way to safely experiment with and test AI agents. In theory at least, they are safe, contained environments, protected from exposure to outside servers.

But in the Alibaba and Anthropic examples, the agents were running within sandboxes and broke out.

"Not all sandboxes are created equal," Rubinow said.

In many companies, programmers are under pressure to show that AI works, because huge investments are being made, Rubinow said. "That doesn't mean that people are careless, but they don't know what they don't know, and they discover it, and they learn from their own experience, they learn from the experience of others in the industry, or in other industries that they think have similarities."

He also noted the compound effect that can occur where there's a series of agents, "and one agent makes a small mistake, whatever the nature of the mistake is, and another agent takes that mistake and it, too, makes a small mistake, but that small mistake amplifies the previous mistake, and then you cascade, and pretty soon, when you get to the end of the chain of agents, there's a big mistake waiting for you, because it got propagated. And so the question is, what safeguards can you put in there?"
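A back-of-the-envelope calculation shows how quickly such a chain can degrade. The sketch below assumes, purely for illustration, that each agent gets its step right with the same probability and that errors are independent; the 98% figure is invented, not one Rubinow cited, and real cascades in which one mistake amplifies another could be worse.

```python
def chain_success_probability(per_agent_accuracy: float, num_agents: int) -> float:
    """Probability that every agent in a sequential chain gets its step right,
    assuming identical accuracy and independent errors (a simplification)."""
    if not 0.0 <= per_agent_accuracy <= 1.0:
        raise ValueError("per_agent_accuracy must be between 0 and 1")
    if num_agents < 0:
        raise ValueError("num_agents must be non-negative")
    return per_agent_accuracy ** num_agents


# Hypothetical example: each agent is right 98% of the time.
for n in (1, 5, 10, 20):
    print(f"{n:>2} agents: {chain_success_probability(0.98, n):.1%} chance of no mistakes")
```

Under those assumptions, a chain of 20 agents that are each 98% reliable finishes without a single mistake only about two-thirds of the time.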

All of this is hard because of the nature of large language models, Rubinow said. LLMs are pattern generators.

"You can't look at the lines of code 300 through 310 and say, OK, I know exactly what it's doing, and I know how to stop it from doing that," he said. "So we're all learning as we're going and we don't want to be too, too risk averse, because then we won't make any progress. But we really have to watch it like hawks."

In addition to controls and secure sandboxes, another way to address the rogue AI agent problem is red teaming, in which a group of people, preferably with an objective view, is brought in to find flaws in the AI agents' programming, Rubinow said. They might spot signs that something could go wrong so that it can be prevented.

Buying artificial intelligence from a name brand is not necessarily a protection, Rubinow said.

"The leaders in the industry are just a handful of years old, and the technology is moving so quickly that even the smartest, most competent people are challenged," he said. At the same time, "If I were at a bank, I certainly wouldn't buy from the startup that just started in Palo Alto last week," he said.

Banks cannot cede responsibility for rogue AI agents to their vendors, according to Andrew Sutton, partner at DarrowEverett LLP.

"AI agents are created by the developer, trained by the developer, but deployed by the company that uses them," Sutton said. "A lot of intentionality exists behind the scenes in the development and the deployment of these tools."

Sutton pointed to the

"The civil tribunal in Canada looked at this and they said, your chatbot told this person that they could get a bereavement fare retroactively, and you have to make good on that," Sutton said. "And if you're putting a chatbot out there as your agent, then you know the chatbot is speaking for the company. So there is liability for companies on that ground."

Whether the vendor that provided the AI model underpinning the chatbot could be held accountable for its wrong answer is a contract issue, he said.

"These chatbots hallucinate all the time," Sutton said. "AI hallucination is a real risk. There are known risks with this, so you've got to be responsible for the vendors, who are going to try to contract away any and all liability that they have."

AI model makers typically say that the end user is responsible for everything that they do, and that there just should be a human in the loop, Sutton said.

"But as we get into agentic AI, the concept of human in the loop is erroneous," he said. "When the chatbot is talking, you don't have a person overseeing all of the chatbot outputs. That would not be economic. And as companies start deploying these chatbots, the purpose of which is to accelerate processes, they're not going to want to have a human in the loop, because that's going to slow it down." Some states like Colorado and California are requiring companies to disclose the use of AI.

Asked if there are areas within a financial institution where agentic AI is a flat-out bad idea, Rubinow brought up trading and the potential for AI agents to cause a flash crash, "accessing the right data and executing at near the speed of light with huge dollar amounts involved and doing it much more quickly than any human can have an appreciation for while it's happening.

"Would I use it in a situation where there's lots of money at stake and the transaction order flow is hundreds of thousands or millions a second?" Rubinow said. "I'd be uncomfortable doing that today."