- Key insight: The IMF's May 7 post reframes Mythos and similar AI models as systemic risks to the financial system, not just operational problems at individual firms.

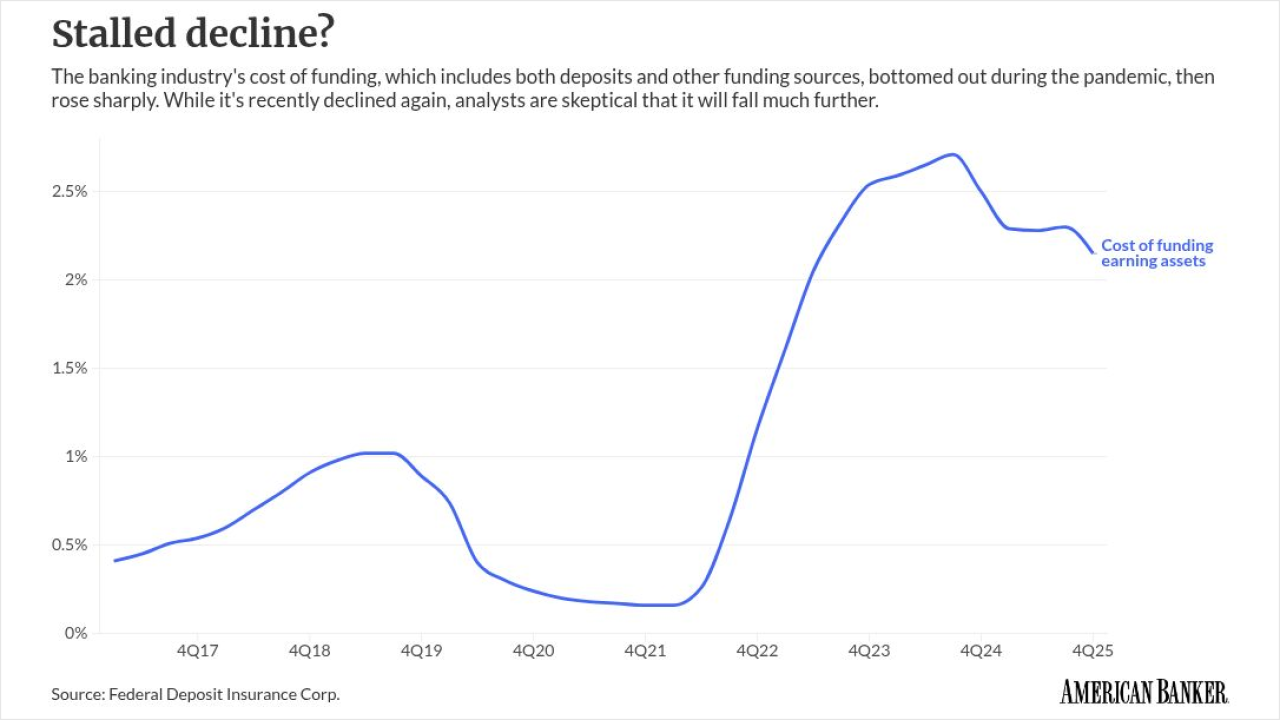

- What's at stake: Banks rely on shared cloud, software and payment infrastructure, so a single AI-powered breach at a major vendor could spread across the financial system.

- Supporting data: Mozilla said Mythos identified 271 vulnerabilities in a single evaluation run of Firefox, compared with 22 bugs from a prior collaboration using Claude Opus 4.6.

Overview bullets generated by AI with editorial review.

The International Monetary Fund last week called on regulators to treat artificial intelligence models such as Anthropic's Mythos as a systemic risk to the financial system rather than an operational problem at particular firms.

Three senior IMF officials wrote in a

The IMF officials prescribed resilience. Whereas prevention keeps attackers out, resilience keeps the financial system running when prevention fails.

To date, the entire U.S. regulatory response to Mythos has amounted to

Bowman addressed Mythos at a Financial Stability Oversight Council roundtable on April 27, where she said the Fed would "continue to consider effective supervisory approaches" as the technology develops.

However, no public guidance came out of the speech.

The IMF started to fill the gap last week. Its post gestures at a potential policy road map: a

A bank preemptively following the paper's suggestions to supervisors would run red-team exercises simulating attacks powered by frontier-AI tools, tighten incident-response plans so that a compromised system can be isolated before damage spreads and make boards explicitly responsible for cyber strategy.

From an operational issue to a systemic one

Bank supervisors largely treat cyber risk as an operational problem at individual firms. The IMF post is part of a campaign by regulators across the world to reframe cyber risk as a sector- and economy-wide challenge.

Cyber risk now entails "correlated failures that could disrupt financial intermediation, payments, and confidence at the systemic level," the fund said. Three forces, it said, drive that shift.

First, AI lets attackers find and exploit software flaws at machine speed. Second, banks run on shared infrastructure: software, cloud services, payment networks. Third, a handful of providers dominate that infrastructure, so one weakness can spread across many firms.

The recent post builds on years of IMF research suggesting that cyberattacks pose growing systemic risks to global finance.

For example, the fund's

The 2024 report attributed the increase to rising digital connectivity, more sophisticated attacks and geopolitical tensions, as evidenced by the surge in cyberattacks after Russia's invasion of Ukraine.

That 2024 report identified three channels through which a cyber incident spreads across the financial system: loss of confidence, lack of substitutes for affected services and interconnectedness. Last week's IMF post applied that framework to Mythos, arguing that AI-driven models speed up attacks moving through all three.

Independent Mythos validation slowly coming out

Immediately following the release of Mythos, Anthropic was the only source for claims about what the model could do. Since then, Mozilla and the U.K.'s AI Security Institute have published their own assessments.

Mozilla, the maker of Firefox, said in an

Bobby Holley, the chief technology officer of Firefox, said in the same post that there is no class of vulnerability that humans can spot but the model misses. In other words, the model is "every bit as capable" as elite human security researchers, he said.

The U.K.'s AI Security Institute came to a similar conclusion in an April 13 evaluation. The institute runs simulated corporate networks and directs AI models to attack them, then measures how far each model gets.

Its hardest test breaks the attack into 32 steps, starting with reconnaissance (mapping out the target network) and ending in full takeover. The institute estimates a human security professional needs about 20 hours to complete all 32.

Mythos is the first AI model the institute has seen complete all 32 steps. It did so in three of 10 attempts.

Across all its attempts, the model completed an average of 22 steps. Claude Opus 4.6, the next-best model tested, averaged 16.
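For readers who want the institute's figures on a common scale, the short sketch below converts them into shares of the 32-step attack chain. It uses only the numbers reported above. The variable and function names are illustrative and do not come from the institute's evaluation.

```python
# Figures as reported in the institute's April 13 evaluation (see text above).
TOTAL_STEPS = 32          # steps in the hardest simulated attack
MYTHOS_AVG_STEPS = 22     # average steps completed by Mythos
OPUS_AVG_STEPS = 16       # average steps completed by Claude Opus 4.6
FULL_RUNS = 3             # Mythos attempts that completed all 32 steps
ATTEMPTS = 10             # total Mythos attempts


def share_of_chain(avg_steps: float, total_steps: int = TOTAL_STEPS) -> float:
    """Return the fraction of the attack chain completed on average."""
    return avg_steps / total_steps


mythos_share = share_of_chain(MYTHOS_AVG_STEPS)
opus_share = share_of_chain(OPUS_AVG_STEPS)
full_completion_rate = FULL_RUNS / ATTEMPTS

print(f"Mythos average progress: {mythos_share:.1%} of the chain")
print(f"Claude Opus 4.6 average progress: {opus_share:.1%} of the chain")
print(f"Mythos full-takeover rate: {full_completion_rate:.0%} of attempts")
```

On these figures, Mythos averaged a little more than two-thirds of the chain and Claude Opus 4.6 averaged half of it.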

What the IMF wants supervisors to do

The prescriptions for supervisors in last week's IMF post draw heavily from the January staff paper that offers the potential policy road map, updated for an AI threat environment.

The fund's post called cyber stress testing, scenario analysis and board-level oversight of cyber risk "indispensable components of financial stability frameworks."

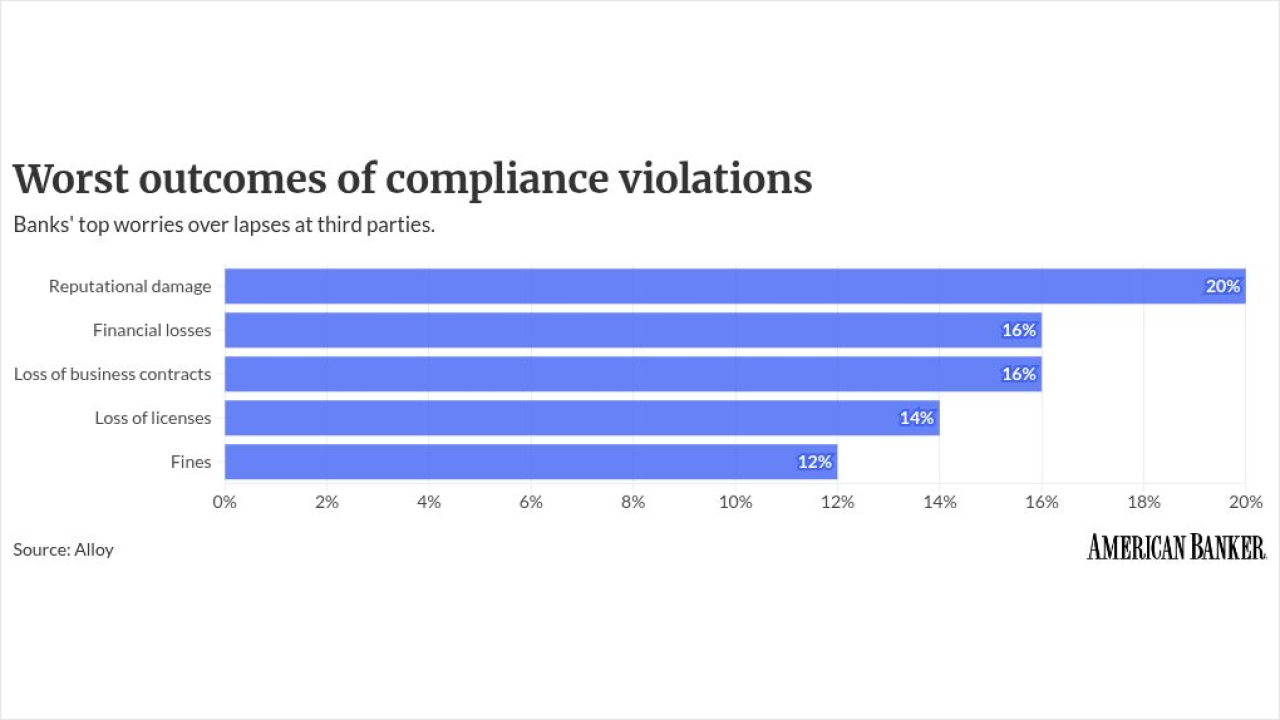

It pushed for tighter oversight of third parties and supply chains, citing concentration in cloud providers, software platforms and AI models as systemic risks.

It urged public-private collaboration on threat intelligence and incident response, and it called for containment controls (network segmentation and similar barriers) to stop a local breach from escalating into a system-wide disruption.

The post also flagged that banks and regulators should deploy AI as a defensive tool, citing OpenAI's

The capabilities of an AI model matter just as much as who gets access to it, according to the fund.

Concentration and cross-border exposure

AI-assisted attacks "can propagate across sectors that rely on the same infrastructure," the fund said, alluding to the concentration risks that financial firms face as vendors consolidate cloud services, core bank software and other markets upon which banks heavily rely.

A bank can harden its own systems and still get hit through one of those consolidated platforms.
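The concentration argument can be illustrated with a toy dependency map. The sketch below is an illustration only: the banks, the vendors and their relationships are invented, and the functions are not drawn from the IMF post. It shows how a breach at one widely used vendor reaches most of a hypothetical sector, while a breach at a niche vendor does not.

```python
from collections import defaultdict

# Hypothetical banks and the shared vendors each one depends on.
# None of these names refers to a real institution or provider.
DEPENDENCIES = {
    "Bank A": {"CloudCo", "CoreSoft"},
    "Bank B": {"CloudCo", "PayNet"},
    "Bank C": {"CloudCo", "CoreSoft", "PayNet"},
    "Bank D": {"SmallCloud", "PayNet"},
}


def banks_exposed_to(vendor: str, dependencies: dict[str, set[str]]) -> list[str]:
    """List the banks that rely on the given vendor, in alphabetical order."""
    return sorted(bank for bank, vendors in dependencies.items() if vendor in vendors)


def exposure_by_vendor(dependencies: dict[str, set[str]]) -> dict[str, float]:
    """Return, for each vendor, the share of banks that depend on it."""
    counts: dict[str, int] = defaultdict(int)
    for vendors in dependencies.values():
        for vendor in vendors:
            counts[vendor] += 1
    total_banks = len(dependencies)
    return {vendor: count / total_banks for vendor, count in counts.items()}


if __name__ == "__main__":
    print(banks_exposed_to("CloudCo", DEPENDENCIES))
    for vendor, share in sorted(exposure_by_vendor(DEPENDENCIES).items()):
        print(f"{vendor}: {share:.0%} of banks exposed")
```

In this made-up example, three of the four banks share one cloud provider, so hardening any single bank's own systems does nothing to reduce that shared exposure.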

Attackers also go for weaker targets, so emerging and developing economies bear more of this risk, the post said. That matters for U.S. banks with correspondent relationships and overseas payment exposure.

The post closes with a question financial authorities will have to answer: Can the global financial system "continue to function under severe stress" posed by systemic risks such as Mythos?

A month after pulling a handful of bank CEOs into a closed-door meeting over the AI model, U.S. supervisors have not published their own guidance.