- Key insights: With AI tech developing quickly, about two-thirds of banks are relying on informal staff training to help employees use new models.

- What's at stake: AI, particularly agentic commerce, poses authentication and other security risks that can be exacerbated by an internal learning curve.

- Forward look: Experts suggest experimental sandboxes and tapping early adopters to surface in-house expertise in new forms of AI.

"Teaching someone to use a new tool is straightforward. Retraining instincts built over 30 years is not," Philip Bruno, chief strategy and growth officer at ACI Worldwide, told American Banker. "AI is becoming the customer. That is the literacy gap most institutions have not closed."

The normal methods of teaching employees how to use technology, such as a tutorial or a training session, don't apply to AI, Bruno said, adding that the interactive and fluid nature of new AI quickly makes any static instruction obsolete.

"Most institutions still frame AI literacy as teaching employees how to use new tools. That part matters," Bruno said. "But the bigger gap is that most institutions have not trained the people who process transactions for a world where the buyer is not human."

Nearly two-thirds of banks rely on informal or "on the job" training for AI, according to research from American Banker, which found that about a third of banks have formal training programs for AI.

"AI literacy in financial services is still in early innings, but the real bottleneck is friction," Ignacio Segovia, global head of engineering at Altimetrik, told American Banker. "Most banks have rolled out AI capabilities faster than they've addressed the structural barriers that prevent sustained adoption, such as context switching costs, the absence of immediate business-as-usual rewards for core workflows, and the competing demands placed on engineering, product and design teams whose day jobs are already critical to the business."

Who knows?

At a recent panel on

That's mostly because generative and agentic AI are so new that a senior, tech-savvy executive does not necessarily know more than an entry-level staffer, she said.

"There are some super experienced software engineers who aren't good at using AI," said Adam Winiger, CEO of Bank of Bots, a fintech startup that sells AI-powered financial services to developers and entrepreneurs who use generative and agentic AI. Winiger suggests banks use a "sandbox" to let staff test AI and provide a venue for early adopters to share their experience.

"You want the people who are tinkering with AI, finding out what works and what doesn't work, to be the tip of the spear," he said.

In addition to its fast evolution, AI also poses cultural challenges. "AI is a change agent and it's important to communicate that it's going to make employees' lives better," said Rishi Chohan, CEO for the U.S. at GFT, an AI firm whose clients include HSBC and Deutsche. "There is an apprehension among workers at banks that AI will take their jobs." Financial technology companies such as Chime and Block have

AI will displace some jobs, as most new technology does, Chohan said. But for the vast majority of workers, it will add new ways to get more information and do their jobs faster, he said.

"AI will bring challenges, but it will make your job much easier. The key is to communicate that to staff when training them," Chohan said. "Ensure them that they're gaining an intelligence companion. They're not learning how to use a program that will make them unnecessary in two months."

What can be taught

The steep learning curve aside, banks are betting heavily on AI. More than half of banks say investing in AI is a high priority, according to

Beyond teaching employees how to use AI programs, including skills such as prompting, ensuring compliance and fact-checking, learning how to vet the validity of agentic transactions will be a major skill set for banks and payment companies.

Three things are breaking in agentic commerce that training and strategy need to address, according to Dan Coates, product management director at ACI Worldwide. First, fraud signals are changing. In production environments today, agent transactions behave differently from anything fraud teams have been trained to evaluate, Coates told American Banker. Where a seller has not implemented native AI interfaces, the sessions still exist, but the patterns look nothing like human browsing.

"The agent moves faster, skips steps and interacts with pages in ways that mimic the exact automation we have spent 30 years trying to block," Coates said. "A fraud engine trained on human patterns reads that behavior as an attack and declines it. That is revenue walking out the door."

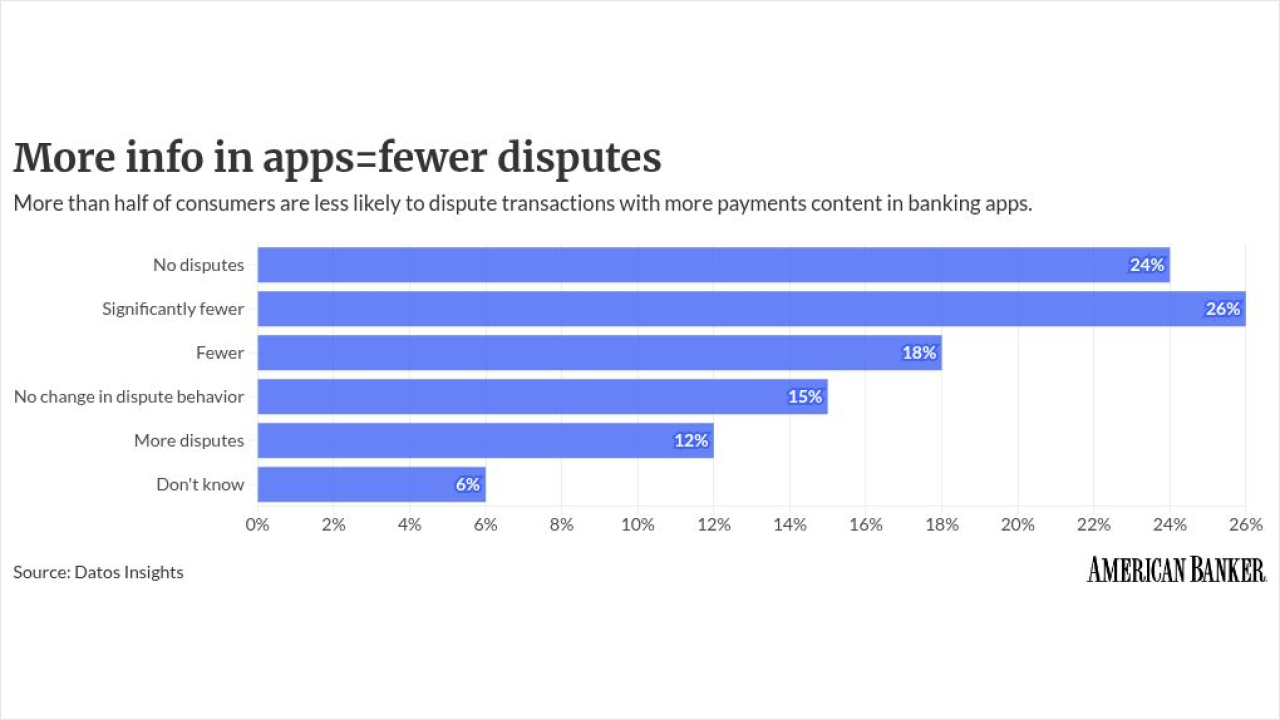

Second, authorization is changing. When a consumer lets an AI agent buy on their behalf, that permission is recorded as a structured set of rules: what it can purchase, how much it can spend, when it expires, Coates said. If the consumer disputes the charge made by an AI agent, that record is the evidence. Dispute teams need to know how to evaluate it in an agentic commerce environment. "At most institutions, very few teams are equipped to do that today," Coates said.
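To make the idea of a "structured set of rules" concrete, here is a minimal Python sketch of how such a permission record might be checked against a disputed charge. It is an illustration only, not any network's or vendor's actual format: the names `AgentMandate`, `Charge` and `mandate_violations`, the field names and the sample values are all hypothetical, and the three fields simply mirror the three elements Coates lists (what the agent can purchase, how much it can spend, when the permission expires).

```python
from dataclasses import dataclass
from datetime import datetime, timezone
from decimal import Decimal


@dataclass(frozen=True)
class AgentMandate:
    """Hypothetical record of what a consumer allowed an AI agent to do."""
    allowed_categories: frozenset  # what the agent can purchase
    spend_limit: Decimal           # how much it can spend on one charge
    expires_at: datetime           # when the permission expires


@dataclass(frozen=True)
class Charge:
    """Hypothetical charge made by the agent on the consumer's behalf."""
    category: str
    amount: Decimal
    made_at: datetime


def mandate_violations(mandate: AgentMandate, charge: Charge) -> list:
    """Return every way the charge falls outside the mandate (empty if none)."""
    problems = []
    if charge.category not in mandate.allowed_categories:
        problems.append("category not permitted")
    if charge.amount > mandate.spend_limit:
        problems.append("amount exceeds spend limit")
    if charge.made_at >= mandate.expires_at:
        problems.append("mandate expired")
    return problems


# Illustrative values only (assumed, not from any real dispute).
mandate = AgentMandate(
    allowed_categories=frozenset({"groceries"}),
    spend_limit=Decimal("150.00"),
    expires_at=datetime(2025, 1, 31, tzinfo=timezone.utc),
)
in_scope = Charge("groceries", Decimal("82.40"),
                  datetime(2025, 1, 15, tzinfo=timezone.utc))
out_of_scope = Charge("electronics", Decimal("200.00"),
                      datetime(2025, 2, 2, tzinfo=timezone.utc))

print(mandate_violations(mandate, in_scope))
print(mandate_violations(mandate, out_of_scope))
```

In a sketch like this, an empty result would suggest the agent stayed within what the consumer authorized, while any listed violation would give a dispute team a specific point to examine. Real mandate formats are likely to carry more detail, such as merchant restrictions or cumulative limits.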

Finally, the rules for managing AI payments are fragmenting, he said. A few competing standards have emerged over the last 18 months governing how AI agents authenticate and pay. Some address the interaction layer, like how agents consume information and services. Others define payment protocols or outline expectations across the entire customer journey. "Visa and Mastercard are building enforcement rules on top of them. Teams that understand the differences will know which agent traffic to accept. Teams that do not will be making blind decisions on transactions they do not recognize," Coates said.

The biggest mistake for banks, merchants and payment companies is treating AI and knowledge of how to use AI as an IT problem.

"Every team that touches a transaction needs this. The institutions building that understanding today will keep their approval rates up and their dispute exposure down," Coates said. "The ones that wait will see it in declining authorizations and chargeback numbers that do not match anything in their models."