Rumblings of discontent about AI deployments at large U.S. banks are reverberating across tech websites.

The complaints suggest that some banks are moving too quickly and that executives are promising unattainable efficiency improvements.

One big-bank software development leader recently wrote anonymously on a tech website that managing directors are looking for ways to improve efficiency with AI, and that such gains are obviously possible, to an extent. Then he offered a warning.

"Instead of presenting a realistic picture of what AI can do, everyone is lying, in a blatantly transparent way, that AI can do their job," this person wrote. "Saying that projects scoped to 2 quarters will be done next month … and completely ignoring quality to push out slop."

This user said his team members are pushing out code "that appears to work but only if you click the buttons in the right order," and that he has to rewrite code in his spare time.

On Reddit, a software programmer at a large bank wrote anonymously that they were told "embracing AI" is a goal for the year, to which another user replied, "Yes, it is very ironic to be receiving that goal at the same time as trying to fix everything that happened as a result of someone putting AI in a workflow without fully understanding the risks."

To be sure, there have always been and probably always will be disgruntled employees unhappy with the rollout of new technology.

But these complaints about generative AI rollouts feel different. Tech users are describing a feeling of helplessness and despair as bank executives promise far more than AI can realistically deliver, on a vast scale. And of course, generative and agentic AI can wreak more havoc more quickly than technologies of the past.

At banks that have venture investments in AI companies, private equity firms are pushing bank directors "to impose their will to adopt technologies that are not ready for prime time, that have yet to be fully vetted," one former banker, who now works with banks as a tech company executive, said in an interview.

"Someone at the top is like, 'What's $2 million to me, if I can put out this press release that says that I did this cool thing and I can lean into all these hyped-up productivity numbers?' And maybe that feeds a narrative on Wall Street that helps their stock pop up for a day," he said.

Banks are also under pressure from their own shareholders, investors and analysts to become more efficient and show a return on AI investments.

That pressure gets passed on to tech leaders, who are forced to buy and use AI models that have not gone through the normally rigorous levels of due diligence and testing, the former banker said.

"All it's going to take is that one mistake to burn down the village," he said.

Moving too quickly

"There is urgency, there is that competitive pressure," Keri Smith, Accenture's global banking data and Al lead, told American Banker. "There's a lot of innovation that's happening almost every week by ecosystem partners. And so there's a bit of that anxiety regarding, How do I keep up? How do I manage it? What we found to be a good balance on that is always looking at the value equation, and what is this doing in terms of moving my business forward? And how am I actually measuring what success looks like?"

Moshe Sambol, vice president of customer solutions at debugging software provider Lightrun, said it's a sign of how advanced the technology has become that the normally conservative financial industry is embracing AI in everyday engineering work.

"Most tier-one U.S. banks have rolled AI coding tools out to nearly their entire engineering workforces, with JPMorganChase even tying AI usage to performance reviews for its 65,000 engineers," Sambol told American Banker. "So the question worth asking is how these organizations are ensuring that their AI actions are safe, auditable and compliant."

Theo Lau, co-founder of Unconventional Ventures, pointed out that recent press releases seem to outpace what gets deployed in production.

"Some financial institutions are moving at a good pace, while many more are stuck in pilot purgatory," Lau told American Banker. "This is not surprising, unfortunately. There is definitely pressure to show that they are doing something, out of FOMO."

Executives overstating what AI can do

Banks have promised investors that billions of dollars of AI benefits are on the way, but the banks' current use-case portfolios aren't equipped to deliver on that pledge, Evident AI researchers wrote in a newsletter this week.

"That creates even more pressure to prove they can actually take those tools to production," the researchers wrote.

Some bank leaders are confused about what the technology can do, Lau said.

"Sometimes leaders describe generative AI as if it reasons," she said. "In reality, it's pattern-matching. That distinction matters when the output touches a customer's account or a regulatory filing. As someone told me recently, being 80% accurate in banking doesn't mean you get 80% of the value."

When Accenture analyzed banks' AI maturity, it found that only 8% are front-runners seeing a strong return on their AI investments.

Some of these AI leaders have "made a conscious decision to move imperfectly, adjust fast, and change the way they're working," Smith said. "So if I have a hiccup in some parts of the process, that's just part of the journey. How do I continue to make that better? How do I continue to evolve and be able to use it?"

Silent errors in AI-generated code

In Sambol's view, coding agents like Claude Code, Cursor and Gemini "create excellent code. It's why adoption is so high."

But these coding assistants address only the scope of the problem that is described to them, and often lack the bigger picture, which is the context in which the code will run, he said.

"This often leads to unintended consequences due to mismatch with other pieces already in place, systems with which the generated code is expected to work," Sambol said.

Developers often don't understand the AI-generated code, he said. "Developers are putting their faith in code that looks good and seems to address the requirements, but they are not well equipped to diagnose and maintain that code when it misbehaves — for example, when silent errors emerge that can take weeks or months after deployment to be detected," he said.

A silent error doesn't trigger any error messages, crashes or alerts. AI systems often appear healthy while producing flawed results.

"In e-commerce, that can mean the wrong price is shown to customers," Sambol said. "In finance, it can mean credit scores affected, incorrect transactions or compliance failures."

It can take longer to fix code produced by generative AI than it would take a human to write the code from scratch.

"When a developer writes code themselves, they hold the mental model," Sambol said. "They know why they made each choice, where the assumptions are, and how the edges should fail. AI-generated code has none of that. When it breaks in production, debugging becomes a process of reverse-engineering a decision you didn't make."

Sambol sees teams regularly spending more time fixing AI-generated bugs than the original AI coding saved them.

"That downstream cost almost never shows up in the productivity numbers quoted publicly, because the dashboards measure 'lines of code accepted,' not 'hours spent in incident response three weeks later,'" he said.

Inadequate validation

One major challenge is a lack of validation that an AI coding agent's suggestions will work correctly.

"Because AI is so fast at creating solutions, the validation mechanism has to keep pace, and today, we're attempting to validate AI workflows with observability systems designed for humans," Sambol said. "This wastes the productivity gains of AI in protracted reviews, but also means that failures slip through and only surface when the code is deployed to production and a specific edge case appears."

If this problem is not addressed, banks could suffer incidents such as Amazon's April glitch, in which its autonomous AI coding tools deleted a production environment while attempting to fix a minor bug, resulting in a 13-hour outage at an Amazon Web Services division.

Insufficient validation of AI-generated code creates a resource drain, Sambol said.

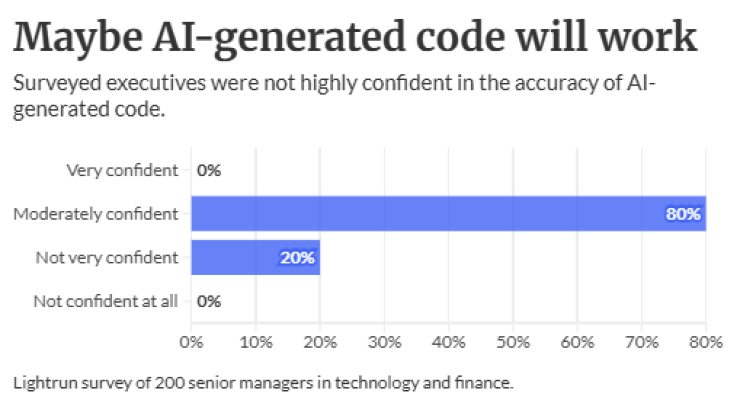

A survey Lightrun conducted found that 39% of finance engineers' weekly working hours are spent debugging and troubleshooting generative AI output code.

"That's more than two days lost a week," Sambol said. "If you think about an engineer's weekly wage, and then multiply that across a bank, you can see how quickly this becomes a hidden, multimillion-dollar tax per institution each year."

People and culture issues

Rolling AI out too quickly can adversely affect people and culture, Lau said.

"Organizations are asking their staff to help them implement systems that will one day replace them," she said. "Unless there is a clear pathway for employees so they can understand where they stand in this new AI era, and if they will still have a job, it's hard to get employees on board."

Some companies mandate the use of AI by asking their teams to check in and submit a report on what they have done with AI every week. "That's a bit too much pressure — and it becomes performative rather than productive," Lau said.

At the other end of the spectrum, Accenture's Smith has seen workers at some organizations ask for AI tools. "I see it as a grassroots effort from the employees to say, 'Can you give me more access to more tools so that I can actually experiment and do more?'" she said.

The rise of AI calls for a new leadership model, Smith said.

"Part of that is, how are people going to have the empathy, the influence, to help bring people from multiple backgrounds, functional areas together and rally people around a vision?" she said. "We're seeing some conversations regarding the leadership blueprint of the future, and what is innately human, and what's going to be needed to be able to operate in this new environment."